I’ve been fortunate enough to be invited as an official blogger at the GOTO Aarhus 2012 conference, which most likely means that a number of new readers will be reading this post, so I’ll start with a short introduction of myself.

Ten years ago I shifted from “regular” software development to software testing with a job as an SDET (Software Development Engineer in Test) at Microsoft in Vedbæk, Denmark. A couple of years as a software developer followed (still with a focus on test and quality assurance), until I started my current job as technical lead for test automation at ScanJour two years ago. We run Scrum in teams of 5-8 people, usually with two domain (manual) testers and one automation tester on each team. The role of the automation tester ranges from automating already defined manual functional test cases to performing load and performance tests.

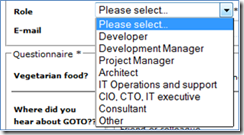

Now I’ve got the chance to participate in a GOTO conference, which seems to be a true developer conference: “Tester” is not even an option in the Role field when you register, so I registered myself as “Other”.

So what’s the state of test automation among developers?

During the last 10-12 years in software development, I’ve seen how unit tests have helped increase the quality of the products we ship, often by catching the most obvious bugs early, but not least by ensuring that the individual classes/units are actually testable (otherwise it wouldn’t be possible to write unit tests against them).

But I still face problems when trying to write automated tests, due to a lack of “testability” of the products in the following areas:

- No silent installation/uninstallation, or not officially supported (so if you report bugs, they are closed “by design”)

- Missing unique IDs on controls in web pages when doing UI tests, which makes it harder to write test automation and makes test cases less stable

- No clear testable layers in the application, which often means you have to resort to doing automation on the UI layer

- Testing is not done in parallel with development, but sometimes only after the developer has started working on a new task, or even in a later sprint (unfortunately)

The last point is not only a developer issue, but it is still a problem for the team, because it means you risk finishing/shipping code that either contains bugs or turns out not to be testable.
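To make the point about unique IDs a bit more concrete, here is a minimal sketch in Python using only the standard library. It is not taken from our actual test suite: the page markup, the IDs (`save-case`, `cancel`, `help`) and the helper names (`find_by_id`, `find_nth`) are all made up for illustration. It compares a position-based locator with an ID-based one when a new button is added to the page.

```python
from html.parser import HTMLParser


class ElementCollector(HTMLParser):
    """Collects (tag, attributes) for every start tag, in document order."""

    def __init__(self):
        super().__init__()
        self.elements = []

    def handle_starttag(self, tag, attrs):
        self.elements.append((tag, dict(attrs)))


def parse(html):
    collector = ElementCollector()
    collector.feed(html)
    return collector.elements


def find_by_id(html, element_id):
    """ID-based locator: independent of where the control sits on the page."""
    for tag, attrs in parse(html):
        if attrs.get("id") == element_id:
            return tag, attrs
    return None


def find_nth(html, tag_name, index):
    """Position-based locator: the fallback when controls have no usable ID."""
    matches = [(t, a) for t, a in parse(html) if t == tag_name]
    return matches[index] if index < len(matches) else None


# Hypothetical page before and after a developer adds a "Help" button.
PAGE_V1 = (
    '<form><button id="save-case">Save</button>'
    '<button id="cancel">Cancel</button></form>'
)
PAGE_V2 = (
    '<form><button id="help">Help</button>'
    '<button id="save-case">Save</button>'
    '<button id="cancel">Cancel</button></form>'
)

# "The first button" silently points at a different control after the change.
first_v1 = find_nth(PAGE_V1, "button", 0)[1]["id"]
first_v2 = find_nth(PAGE_V2, "button", 0)[1]["id"]

# The ID-based locator still finds the Save button in both versions.
save_v1 = find_by_id(PAGE_V1, "save-case")
save_v2 = find_by_id(PAGE_V2, "save-case")

print(first_v1, first_v2)  # save-case help
print(save_v1 == save_v2)  # True
```

A real UI test would use a browser automation tool rather than parsing HTML strings, but the failure mode is the same: a locator based on position breaks or, worse, clicks the wrong control as soon as the layout changes, while a locator based on a unique ID keeps working.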

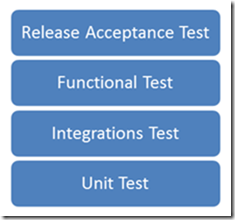

Inspired by ISTQB (a certification for testers), I see the following levels of software tests (simplified; load tests etc. also exist), with unit tests being closest to the code and of course automated, and release acceptance testing often (but not necessarily) being a manual testing activity.

I highly value testing being independent from development (even if part of the same Scrum team), simply to get another pair of eyes to look at the system. I’ve seen many “idea-bugs” (not understanding customer needs correctly) caught early by domain testers that could otherwise easily have slipped all the way to the customer (where the cost of fixing them is higher).

Regarding the issues I raise above concerning lack of testability, I think many of these could be solved by having the software developers write more automated functional tests, in the same way that unit tests ensure some degree of testability of units/classes. After all, it’s in the interest of the whole team to deliver working software to the customers as effectively as possible.

So the questions I’ll try to get answers to while at the GOTO Aarhus 2012 conference are:

- What’s the state of automated functional testing in general?

- Are software developers already automating non-unit tests, such as functional UI tests?

- Is it common to have automation testers in the industry (like my current job), is automation a part of the usual software development activities, or have you actually been able to successfully do capture/replay test automation maintained by domain/manual testers? (I would be surprised if the latter is the case)

You are already welcome to give me input on these topics by leaving a comment below. Thanks.

PS. If you are interested in which GOTO conference sessions a tester finds interesting, you can see my schedule here.